Here you can find information and comments on current events:

Chinese scientists achieve quantum computational advantage

A research team including renowned Chinese quantum physicist Pan Jianwei announced on Friday a significant computing breakthrough: achieving a quantum computational advantage. Pan is a professor of physics at the University of Science and Technology of China, focusing on quantum information and quantum foundations. As one of the pioneers of experimental quantum information science, he has accomplished a series of profound achievements that have brought him worldwide fame. Owing to his advances in quantum communication and multi-photon entanglement manipulation, quantum information science has become one of the most rapidly developing fields of physical science in China in recent years.

The team established a quantum computer prototype, named "Jiuzhang," via which up to 76 photons were detected. The study was published in Science magazine online. With this achievement, China has reached the first milestone on the path to full-scale quantum computing: a quantum computational advantage, also known as "quantum supremacy," which indicates an overwhelming quantum computational speedup. No traditional computer can perform the same task in a reasonable amount of time, and the speedup is unlikely to be overturned by classical algorithmic or hardware improvements, according to the team.

In the study, Gaussian boson sampling (GBS), a photonic sampling task that is believed to be hard to simulate on classical computers, was used to provide a highly efficient way of demonstrating quantum computational speedup in solving some well-defined tasks. The average number of photons detected by the prototype is 43, while up to 76 output photon-clicks were observed. Jiuzhang's quantum computing system can implement large-scale GBS 100 trillion times faster than the world's fastest existing supercomputer. The team also said the new prototype processes 10 billion times faster than the 53-qubit quantum computer developed by Google.

"Quantum computational advantage is like a threshold," said Lu Chaoyang, a professor at the University of Science and Technology of China. "It means that, when a new quantum computer prototype's capacity surpasses that of the strongest traditional computer in handling a particular task, it proves that it will possibly make breakthroughs in multiple other areas."

The breakthrough is the result of 20 years of effort by Pan's team, which conquered several major technological stumbling blocks, including a high-quality photon source. "For example, it is easy for us to have one sip of water each time, but it is difficult to drink just a water molecule each time," Pan said. "A high-quality photon source needs to 'release' just one photon each time, and each photon needs to be exactly the same, which is quite a challenge."

Compared with conventional computers, Jiuzhang is currently just a "champion in one single area," but its super-computing capacity has application potential in areas such as graph theory, machine learning and quantum chemistry, according to the team.

(Cover image via University of Science and Technology of China)

As partners in MDT international Sp.z.o.o. who all work daily with AI software or algorithms, in business or in academia, to improve our research, we became interested in chess as a form of entertainment and as training for strategic skills.

Chess is an ancient game that originated in the 6th century of the current era. Over the centuries it has gained a lot of interest among people who wanted to spend their time in an interesting way, exercising the mind and training tactics and strategy as well.

After the Deep Blue computer beat the reigning World Chess Champion in 1997, humanists and theoretical philosophers started to worry about the future of Artificial Intelligence, or, as we call it, Deep Learning and Machine Learning. Below you can find a link to an interesting article from the creators of the currently most sophisticated chess-playing algorithm, AlphaZero, created by the Google subsidiary DeepMind:

"I can’t disguise my satisfaction that it plays with a very dynamic style, much like my own!"

GARRY KASPAROV

FORMER WORLD CHESS CHAMPION

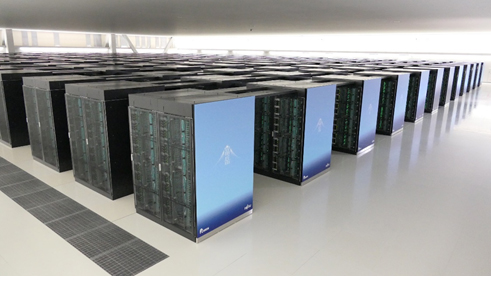

Fujitsu today announced that Fugaku(1), a supercomputer jointly developed by RIKEN and Fujitsu, was ranked No. 1 in the 55th TOP500 list of the world's supercomputers. Fugaku also took the No. 1 position in the international ranking HPCG (High Performance Conjugate Gradient), which measures the processing speed of the conjugate gradient method(2) often used in practical applications, including industrial ones, and in the ranking of HPL-AI, which measures the performance of the low-precision computing often used in AI such as deep learning.

These rankings were announced on June 22 at the ongoing virtual event ISC (International Supercomputing Conference) High Performance 2020 Digital.

The achievement of No. 1 in these three rankings indicates the overall high performance of Fugaku and demonstrates that it can sufficiently respond to the needs of Society 5.0(3), which aims to build a smart society that creates new value. Fugaku can contribute to such a society as an information infrastructure technology, accelerating the solution of social problems with simulation while advancing the development of AI technologies as well as technologies related to information distribution and processing.

Supercomputer Fugaku (in development and preparation)

The Fugaku system ranked first in the TOP500 list consisted of 396 racks (152,064 nodes(4), approximately 95.6% of the entire system), and the LINPACK performance was 415.53 PFLOPS (petaflops) with a computing efficiency ratio of 80.87%. It is the first time a Japanese supercomputer has taken first place in the TOP500 since the K computer claimed No. 1 in November 2011 (the 38th TOP500 list). Fugaku's performance is approximately 2.8 times that of the supercomputer ranked second in the TOP500 list, which achieved 148.6 PFLOPS.

For the HPCG benchmark, 360 racks (138,240 nodes, approximately 87% of the entire system) of Fugaku were used to achieve the high score of 13,400 TFLOPS (teraflops). This shows that the supercomputer can efficiently handle real-world industrial applications. Moreover, Fugaku's score is approximately 4.6 times that of the No. 2 supercomputer (2,925.75 TFLOPS).

Unlike the conventional listings of TOP500 and HPCG, which measure the performance of double-precision arithmetic logic units, HPL-AI is a new benchmark established in November 2019 as an index for evaluating calculation performance that takes into account the capabilities of the single-precision and half-precision arithmetic logic units used in artificial intelligence. For this measurement, a high score of 1.421 EFLOPS (exaflops) was recorded using 330 racks (126,720 nodes, approximately 79.7% of the entire system) of Fugaku.

This is also a historic record, as Fugaku achieved 1 exa (10 raised to the power of 18) in one of the HPL benchmarks for the first time in the world. This shows Fugaku's capability to contribute to the advancement of Society 5.0 as a research platform for machine learning and big data analysis.
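The figures quoted above for the three benchmark runs can be cross-checked with a few lines of arithmetic. The sketch below uses only numbers stated in the announcement; the variable and function names are illustrative, and the implied totals are rough estimates because the quoted percentages are rounded.

```python
# Figures as quoted in the announcement (racks, nodes, share of the full system).
runs = {
    "TOP500": {"racks": 396, "nodes": 152_064, "share": 0.956},
    "HPCG": {"racks": 360, "nodes": 138_240, "share": 0.87},
    "HPL-AI": {"racks": 330, "nodes": 126_720, "share": 0.797},
}


def nodes_per_rack(run):
    """Nodes divided by racks for one benchmark run."""
    return run["nodes"] / run["racks"]


def implied_total_nodes(run):
    """Rough size of the whole system implied by the quoted (rounded) share."""
    return run["nodes"] / run["share"]


# Ratios against the second-ranked systems, from the quoted scores.
top500_ratio = 415.53 / 148.6        # PFLOPS vs. PFLOPS
hpcg_ratio = 13_400 / 2_925.75       # TFLOPS vs. TFLOPS

# Theoretical peak implied by the quoted 80.87% LINPACK efficiency.
implied_peak_pflops = 415.53 / 0.8087

if __name__ == "__main__":
    for name, run in runs.items():
        print(name, nodes_per_rack(run), round(implied_total_nodes(run)))
    print("TOP500 ratio:", round(top500_ratio, 1))
    print("HPCG ratio:", round(hpcg_ratio, 1))
    print("Implied peak (PFLOPS):", round(implied_peak_pflops, 1))
```

All three runs work out to the same number of nodes per rack, and the two ratios round to the 2.8 and 4.6 stated in the text.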

The TOP500 list is a project that regularly ranks and evaluates the 500 fastest supercomputer systems in the world based on LINPACK performance. The LINPACK program, developed by Dr. Jack Dongarra of the University of Tennessee, US, solves a system of linear equations by matrix calculation. The TOP500 project was launched in 1993 and announces the supercomputer ranking twice a year (June and November).

LINPACK measures the computing power for the double-precision floating-point numbers used in many scientific and industrial applications. To get a high score on this benchmark, it is necessary to run a large-scale benchmark for a long time. In general, a high LINPACK score is said to be a comprehensive measure of computing power and reliability.

The TOP500 has long been a popular benchmark for evaluating computing power, measured as the performance in solving a system of linear equations composed of a dense coefficient matrix. More than 20 years have passed since the project was launched in 1993, and recently it has been pointed out that the benchmark no longer reflects the performance requirements of actual applications and that the time required for benchmark testing has grown.

Accordingly, Dr. Dongarra et al. proposed a new benchmark program, HPCG, that uses the conjugate gradient method to solve a system of linear equations composed of a sparse coefficient matrix, a type of system often found in industrial applications. Following the announcement of measurements on the world's leading 15 supercomputer systems at ISC 2014 in June, the official ranking was announced at the International Conference for High Performance Computing, Networking, Storage, and Analysis (SC14) held in New Orleans, US, in November.
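To make the idea concrete, here is a minimal sketch of the conjugate gradient method applied to a small sparse system. It is a textbook illustration, not the HPCG benchmark code: the dict-per-row sparse format, the function names, and the 1D Laplacian test matrix are all assumptions chosen for brevity.

```python
def sparse_matvec(rows, x):
    """Multiply a sparse matrix (one {column: value} dict per row) by a vector."""
    return [sum(v * x[j] for j, v in row.items()) for row in rows]


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def conjugate_gradient(rows, b, tol=1e-10, max_iter=1000):
    """Solve A x = b for a symmetric positive-definite sparse matrix A."""
    x = [0.0] * len(b)
    r = list(b)          # residual b - A x for the initial guess x = 0
    p = list(r)          # first search direction
    rs_old = dot(r, r)
    for _ in range(max_iter):
        if rs_old ** 0.5 < tol:
            break
        ap = sparse_matvec(rows, p)
        alpha = rs_old / dot(p, ap)                       # step length
        x = [xi + alpha * pi for xi, pi in zip(x, p)]
        r = [ri - alpha * api for ri, api in zip(r, ap)]
        rs_new = dot(r, r)
        beta = rs_new / rs_old                            # direction update factor
        p = [ri + beta * pi for ri, pi in zip(r, p)]
        rs_old = rs_new
    return x


def laplacian_1d(n):
    """Tridiagonal test matrix: 2 on the diagonal, -1 on the off-diagonals."""
    rows = []
    for i in range(n):
        row = {i: 2.0}
        if i > 0:
            row[i - 1] = -1.0
        if i < n - 1:
            row[i + 1] = -1.0
        rows.append(row)
    return rows


if __name__ == "__main__":
    a = laplacian_1d(10)
    b = [1.0] * 10
    x = conjugate_gradient(a, b)
    residual = [bi - yi for bi, yi in zip(b, sparse_matvec(a, x))]
    print("largest residual entry:", max(abs(v) for v in residual))
```

Only the nonzero entries are stored and touched, so each iteration is dominated by the sparse matrix-vector product, which is why this kind of workload stresses memory access more than raw arithmetic.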

The TOP500 and HPCG have ranked supercomputers in terms of computational performance for solving a system of linear equations. In both cases, the rules stipulate that only double-precision arithmetic (floating-point numbers with about 16 decimal digits), which has been widely used in scientific and technological calculations as well as industrial applications, should be used for calculations.
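The "about 16 decimal digits" of double precision can be checked directly. This short sketch assumes the Python interpreter's `float` is an IEEE 754 double, which is the case on common platforms but is not guaranteed by the language.

```python
import math
import sys

# Number of bits in the significand of a Python float (53 for IEEE 754 doubles).
mantissa_bits = sys.float_info.mant_dig

# Each bit carries log10(2) decimal digits of precision.
decimal_digits = mantissa_bits * math.log10(2)

# Machine epsilon: the gap between 1.0 and the next larger representable value.
epsilon = sys.float_info.epsilon

if __name__ == "__main__":
    print("significand bits:", mantissa_bits)
    print("equivalent decimal digits:", round(decimal_digits, 2))
    print("1.0 + epsilon > 1.0:", 1.0 + epsilon > 1.0)
    print("1.0 + epsilon / 2 == 1.0:", 1.0 + epsilon / 2 == 1.0)
```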

In recent years, more computers equipped with GPUs or dedicated AI chips have been adding large numbers of low-precision arithmetic logic units (about 5 or 10 decimal digits) to increase their performance. Since these high-performance computing capabilities are not reflected in the TOP500 list, Dr. Dongarra et al. extended the LINPACK benchmark to allow low-precision calculations and proposed a new benchmark, HPL-AI, in November 2019.

HPL-AI allows LINPACK to perform low-precision computations when solving a system of linear equations using LU decomposition(5). However, since the calculation accuracy is inferior to that of double precision calculation, it is required to obtain the same accuracy as double precision calculation by a technique called iterative refinement(6). In other words, it's a two-step benchmark. As the HPL-AI rules were issued in November 2019, this is the first announcement of the benchmark ranking.
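The two steps, a low-precision LU solve followed by iterative refinement, can be sketched in plain Python. This is an illustration under stated assumptions rather than the benchmark's reference code: low precision is simulated by rounding every intermediate result to single precision with `struct`, the function names are hypothetical, and the refinement is the classic "solve for a correction using the double-precision residual" loop.

```python
import struct


def to_f32(x):
    """Round a Python float (double) to the nearest single-precision value."""
    return struct.unpack("f", struct.pack("f", x))[0]


def lu_factor_f32(a):
    """LU factorization with partial pivoting, every result rounded to float32."""
    n = len(a)
    lu = [[to_f32(v) for v in row] for row in a]
    perm = list(range(n))            # perm[k] = original index of row k
    for k in range(n):
        p = max(range(k, n), key=lambda i: abs(lu[i][k]))
        lu[k], lu[p] = lu[p], lu[k]
        perm[k], perm[p] = perm[p], perm[k]
        for i in range(k + 1, n):
            lu[i][k] = to_f32(lu[i][k] / lu[k][k])
            for j in range(k + 1, n):
                lu[i][j] = to_f32(lu[i][j] - to_f32(lu[i][k] * lu[k][j]))
    return lu, perm


def lu_solve_f32(lu, perm, b):
    """Forward and back substitution in simulated float32."""
    n = len(lu)
    y = [to_f32(b[perm[i]]) for i in range(n)]
    for i in range(n):                       # L has an implicit unit diagonal
        for j in range(i):
            y[i] = to_f32(y[i] - to_f32(lu[i][j] * y[j]))
    for i in reversed(range(n)):
        for j in range(i + 1, n):
            y[i] = to_f32(y[i] - to_f32(lu[i][j] * y[j]))
        y[i] = to_f32(y[i] / lu[i][i])
    return y


def residual(a, x, b):
    """b - A x, computed in full double precision."""
    n = len(x)
    return [b[i] - sum(a[i][j] * x[j] for j in range(n)) for i in range(len(b))]


def solve_mixed(a, b, iterations=5):
    """Low-precision LU solve, then iterative refinement in double precision."""
    lu, perm = lu_factor_f32(a)
    x = lu_solve_f32(lu, perm, b)
    for _ in range(iterations):
        r = residual(a, x, b)              # accurate residual
        d = lu_solve_f32(lu, perm, r)      # cheap low-precision correction
        x = [xi + di for xi, di in zip(x, d)]
    return x


if __name__ == "__main__":
    a = [[4.0, 1.0, 2.0], [1.0, 5.0, 1.0], [2.0, 1.0, 6.0]]
    x_true = [1 / 3, -2 / 7, 0.1]
    b = [sum(a[i][j] * x_true[j] for j in range(3)) for i in range(3)]
    x_low = lu_solve_f32(*lu_factor_f32(a), b)
    x_ref = solve_mixed(a, b)
    print("error, low precision only:", max(abs(u - v) for u, v in zip(x_low, x_true)))
    print("error, after refinement:  ", max(abs(u - v) for u, v in zip(x_ref, x_true)))
```

The expensive factorization is done once in low precision and reused for every correction; only the residual needs double precision, which is what lets the final answer recover double-precision accuracy on a well-conditioned system.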

Ten years after the initial concept was proposed, and six years after the official start of the project, Fugaku is now near completion. Fugaku was developed based on the idea of achieving high performance on a variety of applications of great public interest, such as the achievement of Society 5.0, and we are very happy that it has shown itself to be outstanding on all the major supercomputer benchmarks. In addition to its use as a supercomputer, I hope that the leading-edge IT technology developed for it will contribute to major advances on difficult social challenges such as COVID-19.

I believe that our decision to use a co-design process for Fugaku, which involved working with RIKEN and other parties to create the system, was a key to our winning the top position on a number of rankings. I am particularly proud that we were able to do this just one month after the delivery of the system was finished, even during the COVID-19 crisis. I would like to express our sincere gratitude to RIKEN and all the other parties for their generous cooperation and support. I very much hope that Fugaku will show itself to be highly effective in real-world applications and will help to make Society 5.0 a reality.

The Fugaku supercomputer illustrates a dramatic shift in the type of compute that has been traditionally used in these powerful machines, and it is proof of the innovation that can happen with flexible computing solutions driven by a strong ecosystem. For Arm, this achievement showcases the power efficiency, performance and scalability of our compute platform, which spans from smartphones to the world's fastest supercomputer. We congratulate RIKEN and Fujitsu for challenging the status quo and showing the world what is possible in Arm-based high-performance computing.

A new ISO guide will help ensure climate change issues are addressed in every new standard.

Never before have we been so aware of our environment as during the recent lockdown period. Birds were singing, the air was clear and skies were bluer than blue. We also became more aware that our current economic model has caused temperatures to rise and that this will continue to create havoc with our weather – and our communities. The resulting climate change is real and we must address its impact by acting now.

With this in mind, ISO’s Climate Change Coordination Task Force (CCC TF7) has recently developed a new guide for standardizers so that climate change is taken into consideration with every new standard that is written. ISO Guide 84, Guidelines for addressing climate change in standards, provides a systematic approach, relevant principles and useful information to help standards writers address climate change impacts, risks and opportunities in their own standardization work.

Nick Blyth [1], Convenor of CCC TF7 that developed the guide, said every industry needs to mitigate and adapt to climate change.

“Importantly, Guide 84 can help to raise awareness and understanding across the whole standardization community, not just those involved in sustainable development standards,” he said.

“It is relevant to standards used widely in many industries, thus ultimately helping organizations build resilience and preparedness to future climate impacts as well as addressing low-carbon transition risks and opportunities.

“What’s more,” he added, “the guide encourages the revision of existing standards if they do not already consider climate change issues, so that there is greater movement towards sustainability everywhere.”

Use of the guide will also result in a greater contribution to the achievement of the United Nations’ 17 Sustainable Development Goals.

ISO Guide 84 was developed by CCC TF7, which is part of ISO’s Technical Management Board, and is a companion to ISO Guide 82, Guidelines for addressing sustainability in standards.

FIRST MODERN PANDEMIC:

The coronavirus pandemic pits all of humanity against the virus. The damage to health, wealth, and well-being has already been enormous. This is like a world war, except in this case, we’re all on the same side. Everyone can work together to learn about the disease and develop tools to fight it. I see global innovation as the key to limiting the damage. This includes innovations in testing, treatments, vaccines, and policies to limit the spread while minimizing the damage to economies and well-being.

Source: www.gatesnotes.com